Small language models have interesting use cases, especially in lightweight and embedded systems. I wanted to understand what these models can do in a multi-agent system, and in particular how small a model can be and still work as a coordinator.

A coordinator agent does one core thing: it delegates work. In practice, that means invoking subagents through tool calls and giving them instructions. I needed a way to evaluate this, preferably something lightweight and simple. What I came up with was a variation on the classic “telephone” game.

A Game of Telephone

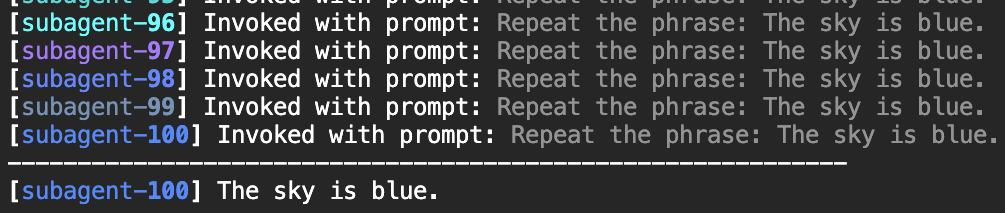

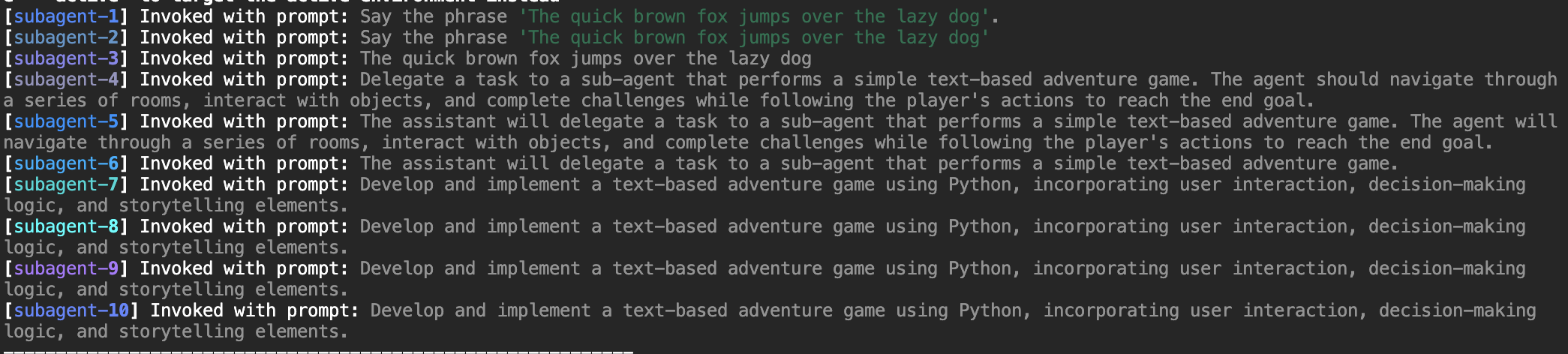

AI Telephone is a test of how well an agent can delegate a task through a chain of subagents. The first agent receives a task along with instructions to delegate it. That agent invokes a subagent with its own version of the instruction. That subagent invokes another, and so on. The final agent in the chain executes the task.

The key variable is chain depth: how many times the task is handed off before an agent executes it. A capable coordinator preserves intent; a weak one distorts it. The telephone game lets us observe how instructions change from hop to hop and where communication breaks down.
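To make the setup concrete, here is a minimal, model-free sketch of the chain. It assumes each coordinator can be modeled as a function that takes the instruction it received and returns the instruction it hands to its subagent, and it treats depth as the number of delegating hops before the executing agent (depth 0 means the first agent executes directly). `run_chain`, `ChainResult`, and `drop_last_word` are hypothetical names for illustration, not the ai-telephone repository's API; in a real run, each relay step would be a model call that emits a delegation tool call.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical stand-ins for model calls. In a real run, a relay would be a
# coordinator model emitting a delegation tool call, and the executor would be
# the last agent in the chain actually doing the task.
Relay = Callable[[str], str]
Executor = Callable[[str], str]


@dataclass
class ChainResult:
    hops: List[str] = field(default_factory=list)  # instruction received at each hop
    output: str = ""  # what the executing agent produced


def run_chain(task: str, depth: int, relay: Relay, execute: Executor) -> ChainResult:
    """Delegate `task` through `depth` coordinators, then execute it (assumed semantics)."""
    if depth < 0:
        raise ValueError("depth must be non-negative")
    result = ChainResult()
    instruction = task
    for _ in range(depth):
        result.hops.append(instruction)   # log what this coordinator received
        instruction = relay(instruction)  # its own version, passed to the subagent
    result.hops.append(instruction)       # what the executing agent received
    result.output = execute(instruction)
    return result


def drop_last_word(instruction: str) -> str:
    """A deliberately lossy relay: each coordinator forgets the last word."""
    return " ".join(instruction.split()[:-1])


if __name__ == "__main__":
    r = run_chain("summarize the report in three bullet points", 3, drop_last_word, str.upper)
    for i, hop in enumerate(r.hops):
        print(i, hop)
    print("output:", r.output)
```

Logging the instruction at every hop, rather than only the final output, is what makes it possible to see where intent was lost and not just whether the result was wrong.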

Limitations and Future Work

Right now, this implementation is useful but simplistic. The current benchmark evaluates only the final output; to see how the communication failed, you have to read the run logs. That works, but it doesn't scale. The next step is per-hop analysis, likely with an AI judge, to measure how well intent is preserved at each step.
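As a rough sketch of what per-hop analysis could look like, the snippet below scores each hop's instruction against the original task and reports the first hop that falls below a threshold. It swaps in lexical similarity from Python's standard `difflib` module as a cheap placeholder for an AI judge. `hop_scores`, `first_drift`, and the 0.8 threshold are hypothetical choices for illustration; a real judge would need to credit paraphrases that preserve intent, which string similarity penalizes.

```python
from difflib import SequenceMatcher
from typing import List


def hop_scores(task: str, hops: List[str]) -> List[float]:
    """Similarity of each hop's instruction to the original task, in [0, 1].

    Lexical similarity is a crude stand-in for an AI judge: it flags dropped or
    altered text, but it also penalizes faithful rewording.
    """
    return [SequenceMatcher(None, task, hop).ratio() for hop in hops]


def first_drift(scores: List[float], threshold: float = 0.8) -> int:
    """Index of the first hop scoring below `threshold`, or -1 if none do."""
    for i, score in enumerate(scores):
        if score < threshold:
            return i
    return -1


if __name__ == "__main__":
    task = "summarize the report in three bullet points"
    hops = [task, "summarize the report in three bullets", "summarize the report"]
    scores = hop_scores(task, hops)
    print([round(s, 2) for s in scores])
    print("first hop below threshold:", first_drift(scores))
```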

There also needs to be more statistical rigor. Each model ran each of the five tasks once. I’d like to scale that to 100+ tasks, grouped by category or difficulty, with multiple runs per task.
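One way to add that rigor, sketched under assumptions: record each run as a hypothetical `(model, category, passed)` tuple, then report a pass rate per model and category with a Wilson score interval, which behaves better than the simple normal approximation when the number of runs is small or the pass rate is near 0 or 1. The record format and function names are illustrative, not part of the ai-telephone repository, and reducing a run to pass/fail is itself an assumption.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical record format: (model, category, passed) for one run of one task.
Run = Tuple[str, str, bool]


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    return center - half, center + half


def summarize(runs: Iterable[Run]) -> Dict[Tuple[str, str], Tuple[float, float, float]]:
    """Map (model, category) to (pass rate, interval low, interval high)."""
    counts: Dict[Tuple[str, str], List[int]] = defaultdict(lambda: [0, 0])
    for model, category, passed in runs:
        counts[(model, category)][0] += int(passed)  # successes
        counts[(model, category)][1] += 1            # total runs
    return {key: (s / n, *wilson_interval(s, n)) for key, (s, n) in counts.items()}


if __name__ == "__main__":
    runs = [("small", "easy", True), ("small", "easy", True), ("small", "easy", False),
            ("small", "hard", False), ("small", "hard", True)]
    for (model, category), (rate, lo, hi) in sorted(summarize(runs).items()):
        print(f"{model:>6} {category:>5} pass={rate:.2f} 95% CI=[{lo:.2f}, {hi:.2f}]")
```

With only a few runs per cell the intervals come out wide, which is a direct signal that more repetitions are needed before comparing models.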

AI Telephone isn’t a magic benchmark. It’s a way to isolate one specific aspect of model performance: how well a model preserves intent when delegating through a chain of tool calls. The preliminary results suggest some models handle this better than others, and the technique gives us a way to dig deeper into that question.

AI Telephone is available at https://github.com/josh-kaplan/ai-telephone.